Researchers from RAND, Brown University School of Public Health and Harvard report that young people are turning to generative AI tools, like ChatGPT, for mental health advice at unexpectedly high rates.

About one in eight U.S. adolescents and young adults are turning to AI chatbots for mental health advice, with use most common among those ages 18 to 21, according to a new study in JAMA Network Open.

The study — co-authored by researchers from Brown University School of Public Health, Harvard Medical School and RAND, a nonprofit research organization — provides the first nationally representative estimates of how often adolescents and young adults rely on generative AI, such as ChatGPT, for help when feeling sad, angry or nervous. It was led by Jonathan Cantor, a senior policy researcher at RAND.

“There has been a lot of discussion that adolescents were using ChatGPT for mental health advice, but to our knowledge, no one had ever quantified how common this was,” said Ateev Mehrotra, professor at the Brown University School of Public Health and a coauthor of the study.

The researchers surveyed 1,058 adolescents and young adults ages 12 to 21 between February and March 2025. Among those who used chatbots for mental health advice, two-thirds engaged at least monthly and nearly 93% said the advice was helpful. Usage was even higher among young adults, with roughly one in five respondents ages 18 to 21 reporting using large language models for mental health support.

“I think the most striking finding was that already, in late 2025, more than 1 in 10 adolescents and young adults were using LLMs for mental health advice and that it was higher among young adults,” Mehrotra said. “I find those rates remarkably high.”

Researchers note that the high utilization likely reflects the low cost, immediacy and perceived privacy of AI-based advice, which is particularly appealing to youth who may not receive traditional counseling. The findings come at a time when the United States continues to face a youth mental health crisis, with nearly one in five adolescents experiencing a major depressive episode in the past year and 40% of those adolescents receiving no mental health care.

They also come amid reports that OpenAI is facing seven lawsuits alleging that ChatGPT drove users to delusions and suicide.

“There are few standardized benchmarks for evaluating mental health advice offered by AI chatbots, and there is limited transparency about the datasets that are used to train these large language models,” Cantor said.

The study, which was supported by the National Institute of Mental Health, also identified racial disparities. For example, Black respondents were less likely to report that chatbot advice was helpful, suggesting possible gaps in cultural competency.

The survey did not capture whether the advice was for diagnosed mental illness, and researchers say further work is needed to understand how generative AI affects young people with existing mental health conditions.

“Obviously the key question is how can LLMs be most helpful but at the same time limit their harm,” Mehrotra said. “But it changes my thinking from adolescents might use AI in the future and emphasizes this is already extremely common.”

Since the launch of large language model (LLM) chatbots, use of this form of generative artificial intelligence (AI) has grown rapidly, especially among adolescents and young adults [1]. Concurrently, the US is experiencing a youth mental health crisis [2]. In the past year, 18% of adolescents aged 12 to 17 years had a major depressive episode; 40% of these received no mental health care [3].

It is unclear how many adolescents and young adults use LLM chatbots for advice or help when experiencing emotional distress. We report results from the first nationally representative survey of US adolescents and young adults aged 12 to 21 years examining the prevalence, frequency, and perceived helpfulness of advice from generative AI when feeling sad, angry, or nervous.

Methods

This cross-sectional study was approved by Harvard’s institutional review board and follows the STROBE reporting guideline. Informed consent was collected. Between February and March 2025, we surveyed youths from RAND’s American Life Panel and Ipsos’ KnowledgePanel, which use random sampling from population frames of US households. The resulting survey statistics generalize to the population of US English-speaking youths aged 12 to 21 years with internet access. The survey assessed whether respondents had ever used generative AI (yes or no); whether they had sought advice or help from generative AI when feeling sad, angry, or nervous (yes or no; hereafter, mental health advice); frequency of seeking such advice; and perceived helpfulness of such advice.

To ensure comprehension among youths as young as 12 years, the focal question (No. 2) used plainspoken terms describing common emotional states associated with mental health needs (feeling sad, angry, or nervous). We also explicitly asked about generative AI use “for advice or help” in those circumstances. Respondents were given a definition of generative AI and examples (ChatGPT [OpenAI], Gemini [Google AI and DeepMind], and My AI [Snap]). Details on panel construction, survey questions, and methodology are in eMethods 1-3 in Supplement 1.

We calculated survey-weighted percentages and conducted multivariable logistic regression to examine factors associated with generative AI use for mental health advice: respondent age, sex, and race and ethnicity; highest parental education level; parental marital status; and census region. Analyses used Stata version 19.5 (StataCorp).
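As an illustration of what a survey-weighted percentage is, the following minimal Python sketch weights each respondent's yes/no answer by a sampling weight. It is not the study's Stata code; the function name `weighted_percentage` and the toy data are hypothetical, and the sketch covers neither the variance estimation nor the multivariable logistic regression.

```python
def weighted_percentage(indicators, weights):
    """Return the weighted share (in percent) of respondents whose indicator is 1."""
    if len(indicators) != len(weights):
        raise ValueError("indicators and weights must have the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    # Sum the weights of respondents who answered in the affirmative.
    affirmative_weight = sum(w for y, w in zip(indicators, weights) if y == 1)
    return 100.0 * affirmative_weight / total_weight


# Hypothetical toy data, not taken from the study.
used_ai_for_advice = [1, 0, 0, 1, 0]          # 1 = yes, 0 = no
survey_weights = [1.0, 2.0, 3.0, 1.0, 3.0]    # persons represented by each respondent

unweighted_pct = 100.0 * sum(used_ai_for_advice) / len(used_ai_for_advice)
weighted_pct = weighted_percentage(used_ai_for_advice, survey_weights)
print(f"unweighted: {unweighted_pct:.1f}%  weighted: {weighted_pct:.1f}%")
```

In the toy data the two respondents who answered yes carry small weights, so the weighted percentage falls below the unweighted one. This is why weighted and unweighted figures can differ.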

Results

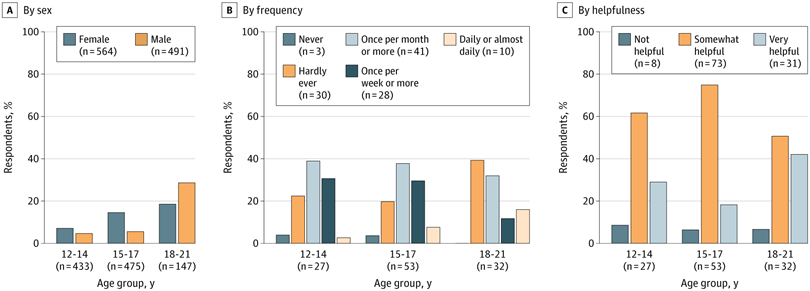

Of 2125 individuals contacted, 1058 responded (response rate, 49.8%; 37.0% aged 18 to 21 years; 50.3% female; 13.0% Black, 25.2% Hispanic, and 51.3% White) (Table). In total, 13.1% of respondents reported using generative AI for mental health advice (Figure), with higher rates among those aged 18 to 21 years (22.2%). Among users, 65.5% sought advice monthly or more often and 92.7% found the advice somewhat or very helpful. All percentages reported in the Results are weighted.
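The response rate and the gap between unweighted and weighted shares can be reproduced with simple arithmetic. The sketch below uses only counts reported in the text and the Table; the variable names are illustrative, and the weighted total is assumed to be the sum of the two weighted sex categories.

```python
# Counts reported in the text.
contacted = 2125
responded = 1058
response_rate = 100.0 * responded / contacted

# Respondents aged 18 to 21 years, from the Table.
unweighted_18_21 = 147
weighted_18_21 = 15_210_983
# Assumption: weighted total = weighted males + weighted females from the Table.
weighted_total = 20_456_992 + 20_687_859

unweighted_share = 100.0 * unweighted_18_21 / responded
weighted_share = 100.0 * weighted_18_21 / weighted_total

print(f"response rate: {response_rate:.1f}%")
print(f"aged 18-21, unweighted share: {unweighted_share:.1f}%")
print(f"aged 18-21, weighted share: {weighted_share:.1f}%")
```

The oldest age group makes up a much smaller share of the unweighted sample than of the weighted population, which is consistent with the caution the authors attach to estimates for this group.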

Table. Descriptive Characteristics of Survey Sample

| Sample characteristic | Sample size, No. (%) | Weighted sample size, No. (%) |
|---|---|---|
| Sex | | |
| Male | 493 (46.6) | 20 456 992 (49.7) |
| Female | 565 (53.4) | 20 687 859 (50.3) |
| Age, y | | |
| 12-14 | 434 (41.0) | 12 338 928 (30.0) |
| 15-17 | 477 (45.1) | 13 594 940 (33.0) |
| 18-21 | 147 (13.9) | 15 210 983 (37.0) |
| Race and ethnicity | | |
| Black | 103 (9.7) | 5 327 470 (13.0) |
| Hispanic | 258 (24.4) | 10 372 657 (25.2) |
| White non-Hispanic | 576 (54.4) | 21 118 502 (51.3) |
| Other | 121 (11.4) | 4 326 222 (10.5) |
| Parent education | | |
| ≤High school degree | 304 (28.7) | 13 970 346 (34.0) |
| Some college | 314 (29.7) | 13 750 439 (33.4) |
| ≥Bachelor’s degree | 440 (41.6) | 13 424 066 (32.6) |
| Parent current living status | | |
| Married | 702 (66.4) | 21 646 464 (52.6) |
| Separated, divorced, or widowed | 127 (12.0) | 4 129 086 (10.0) |
| Never married | 229 (21.6) | 15 369 300 (37.4) |
| Census region | | |
| Northeast | 203 (19.2) | 6 933 850 (16.9) |
| Midwest | 197 (18.6) | 8 440 499 (20.5) |
| South | 443 (41.9) | 16 333 616 (39.7) |
| West | 215 (20.3) | 9 436 885 (22.9) |

Figure. Use of Generative Artificial Intelligence for Mental Health Advice

Weighted percentages represent respondents reporting in the affirmative to the response category. The number of observations is unweighted. Mental health advice was defined in the survey as “advice or help when feeling sad, angry, or nervous.”

In multivariable regression analyses, generative AI use for mental health advice was higher among youths aged 18 to 21 years (adjusted odds ratio [aOR], 3.99; 95% CI, 1.90-8.34; P < .001) compared with younger adolescents. Among users, Black respondents were less likely to report the advice as helpful (aOR, 0.15; 95% CI, 0.04-0.65; P = .01) compared with White non-Hispanic respondents. No other differences were statistically significant.
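The reported odds ratios, confidence intervals, and P values can be checked for internal consistency. The sketch below assumes Wald-type intervals that are symmetric on the log-odds scale and a normal reference distribution; the study's survey-weighted software may use a different reference distribution, so the results are approximate. The function name `wald_summary` is hypothetical.

```python
import math


def wald_summary(odds_ratio, ci_lower, ci_upper, z_crit=1.96):
    """Recover the log-scale standard error, z statistic, and two-sided P value.

    Assumes a 95% Wald interval: log(OR) +/- z_crit * SE.
    """
    se = (math.log(ci_upper) - math.log(ci_lower)) / (2 * z_crit)
    z = math.log(odds_ratio) / se
    # Two-sided P value under a standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return se, z, p_value


# Values as reported in the text.
se_age, z_age, p_age = wald_summary(3.99, 1.90, 8.34)
se_black, z_black, p_black = wald_summary(0.15, 0.04, 0.65)

print(f"age 18-21:   SE={se_age:.3f}, z={z_age:.2f}, p={p_age:.4f}")
print(f"helpfulness: SE={se_black:.3f}, z={z_black:.2f}, p={p_black:.4f}")
```

Under these assumptions, the first P value falls below .001 and the second is close to .01, in line with the reported values. Small discrepancies are expected because the inputs are rounded.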

Discussion

In this nationally representative cross-sectional survey, we found that 13.1% of US youths, representing approximately 5.4 million individuals, used generative AI for mental health advice, with higher rates (22.2%) among those 18 years and older. Of these 5.4 million users, 65.5% engaged at least monthly and 92.7% found the advice helpful.
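The figure of approximately 5.4 million follows from applying the weighted prevalence to the weighted population total. The sketch below assumes that total equals the sum of the weighted sex categories in the Table; the variable names are illustrative.

```python
# Assumption: weighted population total = weighted males + weighted females (Table).
weighted_population = 20_456_992 + 20_687_859

# Weighted prevalence of generative AI use for mental health advice, from the text.
prevalence = 0.131

estimated_users = prevalence * weighted_population
print(f"estimated users: {estimated_users / 1e6:.1f} million")
```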

High use rates likely reflect the low cost, immediacy, and perceived privacy of AI-based advice, particularly for youths unlikely to receive traditional counseling [4]. However, engagement with generative AI raises concerns [5], especially for users with intensive clinical needs, given difficulties in establishing and using standardized benchmarks for evaluating AI-generated mental health advice and limited transparency about the datasets training these models [6]. Furthermore, Black respondents reported lower perceived helpfulness, signaling potential cultural competency gaps.

Study limitations include modest sample size, potential nonresponse and survey response biases, and an absence of information on the generative AI used and types of advice sought. Our sample size was particularly small (147 respondents) for ages 18 to 21 years, and findings should be interpreted with caution. Our findings are generalizable only to English speakers aged 12 to 21 years with internet access. Additionally, the survey did not include measures of diagnosed mental illness. Future research should examine use rates among children and youths with mental health conditions and associations with mental health outcomes.

Article Information

Accepted for Publication: August 28, 2025.

Published: November 7, 2025. doi:10.1001/jamanetworkopen.2025.42281

Open Access: This is an open access article distributed under the terms of the CC-BY License. © 2025 McBain RK et al. JAMA Network Open.

Corresponding Author: Jonathan Cantor, PhD, RAND, 1776 Main St, m4246, Santa Monica, CA 90401 (jcantor@rand.org).

Author Contributions: Dr Cantor had full access to all of the data in the study and takes responsibility for the integrity of the data and the accuracy of the data analysis.

Concept and design: McBain, L. Zhang, Burnett, Mehrotra, Yu.

Acquisition, analysis, or interpretation of data: McBain, Bozick, Diliberti, F. Zhang, Kofner, Rader, Stein, Uscher-Pines, Yu.

Drafting of the manuscript: McBain.

Critical review of the manuscript for important intellectual content: McBain, Bozick, Diliberti, L. Zhang, F. Zhang, Burnett, Kofner, Rader, Stein, Mehrotra, Uscher-Pines, Yu.

Statistical analysis: F. Zhang, Kofner.

Obtained funding: Mehrotra, Yu.

Administrative, technical, or material support: McBain, Bozick, L. Zhang, Burnett, Kofner, Yu.

Supervision: McBain, Uscher-Pines, Yu.

Conflict of Interest Disclosures: Dr F Zhang reported receiving grants from Pfizer and GSK plc paid to Harvard Pilgrim Health Care outside the submitted work. Dr Mehrotra reported receiving personal fees from Black Opal Ventures outside the submitted work. Dr Cantor reported receiving grants from the National Institute of Mental Health and National Institute on Aging and personal fees from Chestnut Health, the Aspen Institute, and the US Government Accountability Office outside the submitted work. Dr Yu reported receiving grants from the National Institute of Mental Health, National Institute on Alcohol Abuse and Alcoholism, and National Institute of Nursing Research outside the submitted work.

Funding/Support: Support was provided by grant R01MH132551 from the National Institute of Mental Health.

Role of the Funder/Sponsor: The funder had no role in the design and conduct of the study; collection, management, analysis, and interpretation of the data; preparation, review, or approval of the manuscript; and decision to submit the manuscript for publication.

Disclaimer: The content is solely the responsibility of the authors and does not necessarily represent the views of the National Institute of Mental Health.

Data Sharing Statement: See Supplement 2.

References

1. Bick A, Blandin A, Deming DJ. The rapid adoption of generative AI. Published online September 2024. Accessed October 9, 2025. https://www.nber.org/system/files/working_papers/w32966/w32966.pdf

2. Office of the Surgeon General. Protecting Youth Mental Health: The U.S. Surgeon General’s Advisory. US Department of Health and Human Services; 2021. Accessed March 23, 2024. https://www.ncbi.nlm.nih.gov/books/NBK575984/

3. Substance Abuse and Mental Health Services Administration. Key substance use and mental health indicators in the United States: results from the 2023 National Survey on Drug Use and Health (HHS publication No. PEP24-07-021, NSDUH series H-59). Center for Behavioral Health Statistics and Quality, Substance Abuse and Mental Health Services Administration. 2024. Accessed October 9, 2025. https://www.samhsa.gov/data/report/2023-nsduh-annual-national-report

4. Lawrence HR, Schneider RA, Rubin SB, Matarić MJ, McDuff DJ, Jones Bell M. The opportunities and risks of large language models in mental health. JMIR Ment Health. 2024;11(1):e59479. doi:10.2196/59479

5. Stade EC, Stirman SW, Ungar LH, et al. Large language models could change the future of behavioral healthcare: a proposal for responsible development and evaluation. Npj Ment Health Res. 2024;3(1):12. doi:10.1038/s44184-024-00056-z

6. Abbasian M, Khatibi E, Azimi I, et al. Foundation metrics for evaluating effectiveness of healthcare conversations powered by generative AI. NPJ Digit Med. 2024;7(1):82. doi:10.1038/s41746-024-01074-z